Large language models (LLMs) are trained on huge amounts of text data. During training, they learn the statistical patterns and relationships between words and sentences. Based on that learning, they can generate new text that is coherent and relevant to the input you give them. It's a bit like they've picked up the grammar and flow of human language from all the text they've read.

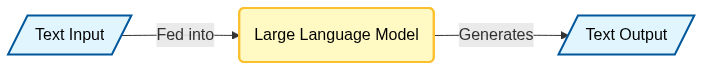

At their core, LLMs simply receive text as input and generate text as output.
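
To make the "learn patterns, then generate text" idea concrete, here is a minimal toy sketch in Python. It is not how a real LLM works internally: real models use neural networks over tokens, while this example only counts which word tends to follow which. The function names `train_bigram_model` and `generate` and the tiny corpus are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(corpus):
    """'Training': count, for every word, which words follow it in the corpus."""
    words = corpus.lower().split()
    follow_counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follow_counts[current_word][next_word] += 1
    return follow_counts


def generate(model, prompt, max_new_words=5):
    """Text in, text out: extend the prompt one word at a time."""
    words = prompt.lower().split()
    if not words:
        return ""
    for _ in range(max_new_words):
        candidates = model.get(words[-1])
        if not candidates:
            break  # the last word never appeared in training, so stop
        # Greedy choice: append the most frequent follower of the last word.
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)


# Hypothetical toy corpus, standing in for "huge amounts of text".
corpus = "the cat sat on the mat . the cat sat on the sofa ."
model = train_bigram_model(corpus)

print(generate(model, "the cat", max_new_words=3))  # the cat sat on the
```

The interface is the part that carries over to real LLMs: a string goes in, and a longer string comes out, built by repeatedly predicting what comes next from patterns seen during training.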